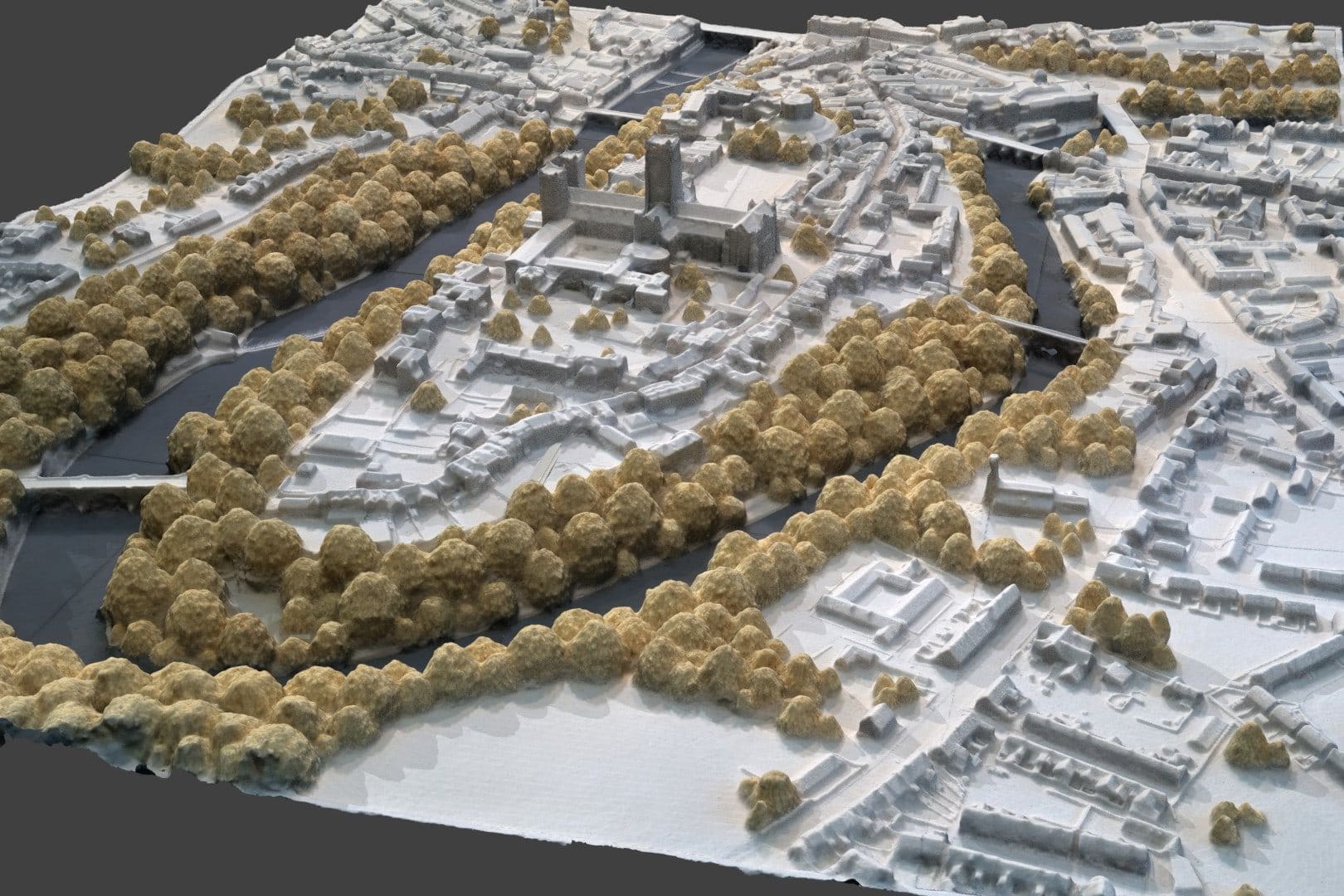

Durham 3D might be a miniature photogrammetry model, but its small size belies some statistics that are anything but.

The number of photographs, the processing time and the number of vertices produced in the final model make the Durham 3D peninsula model the largest and most interesting photogrammetry project that ExplorAR has taken on so far.

Photogrammetry is the process of turning photographs into 3D models of objects. If you take enough pictures to cover the entire object, and the photographs overlap enough, photogrammetry software can search for common points with which to align the images. By seeing how those points shift between pictures (parallax), the software can then place them in 3D space, before overlaying a skin, or ‘mesh’, to recreate the object.
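To give a feel for how parallax turns into distance, here is a minimal Python sketch of the simplest possible case: two photos taken side by side, looking in the same direction. The function name and all the numbers are made up for illustration, and real photogrammetry software solves a much harder version of this across hundreds of photos at once.

```python
def depth_from_parallax(focal_length_px: float, baseline_m: float, shift_px: float) -> float:
    """Distance to a point seen in two side-by-side photos (simplified stereo model).

    focal_length_px: lens focal length expressed in pixels (hypothetical value below)
    baseline_m:      how far the camera moved between the two photos
    shift_px:        how far the point appears to move between the photos (parallax)
    """
    if shift_px <= 0:
        raise ValueError("the point must shift between the two photos")
    return focal_length_px * baseline_m / shift_px


# Hypothetical numbers: 3000 px focal length, camera moved 2 cm between shots.
near = depth_from_parallax(3000, 0.02, 150)  # big shift: the point is close
far = depth_from_parallax(3000, 0.02, 100)   # small shift: the point is further away
print(near, far)
```

The takeaway is the inverse relationship: the more a point appears to move between two pictures, the closer it is to the camera.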

Here’s a slimmed-down version of Durham 3D, which lets you see Durham's key heritage locations; drag your finger/mouse to turn it around and pinch/scroll to zoom:

We’ve had some fun with all sorts of photogrammetry projects in the past, from gravestones at Durham Cathedral and Newcastle Cathedral, to precious artefacts inside Hexham Abbey. But the Durham 3D model is different for a number of reasons.

The basis for Durham 3D is actually a physical model of the peninsula, which you can find in the Durham World Heritage Site visitor centre on Owengate, the cobbled path leading from Palace Green in front of Durham Cathedral. While this certainly made the first part of the project simpler – without a model the project would have required drone mapping to cover the entire peninsula area – it also made other aspects more difficult.

Firstly, the model is a large tabletop size, meaning that there is an immense amount of detail packed into a pretty small space; the only way to capture all of this detail was to take a lot of photographs, very close together, so that every angle of the miniature buildings and bridges was covered. The golden rule in photogrammetry is that the computer can’t make a model from something it can’t see – in other words, if you haven’t photographed something, it’ll end up as a hole in the final model. This becomes a lot harder when the object is very detailed.

The other main problem with Durham 3D was that the entire model is covered in a permanent perspex box; aside from limiting how close you can get to the model, it also introduces the other arch nemesis of good photogrammetry: reflection. Reflective surfaces are notoriously difficult to deal with when creating 3D models because the brightness and reflections confuse the software; it struggles to find common points.

To ensure the detail and all angles were adequately covered when creating Durham 3D, we moved the camera only a couple of centimetres between each picture and took the majority of the photographs looking directly down on the model rather than from, for instance, a 45-degree angle; this let us capture the detail between buildings, which would otherwise occlude each other if pictured from the side. The end result was 20 rows of 50 pictures each, giving 1,000 photographs – not too shabby for a tabletop object!
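For anyone planning a similar overhead sweep, the shot list can be sketched in a few lines of Python. The 20 rows and 50 pictures per row come from our capture; the spacing values are only placeholders (2 cm stands in for "a couple of centimetres", and the row spacing is a guess), and `capture_grid` is a name invented for this example.

```python
def capture_grid(rows: int, cols: int, col_step_cm: float, row_step_cm: float) -> list[tuple[float, float]]:
    """Camera positions (x, y) in centimetres for a row-by-row overhead sweep."""
    return [
        (col * col_step_cm, row * row_step_cm)
        for row in range(rows)
        for col in range(cols)
    ]


# 20 rows of 50 pictures; both spacings are assumed values, not measurements.
positions = capture_grid(rows=20, cols=50, col_step_cm=2.0, row_step_cm=5.0)
print(len(positions))  # 1000
```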

To deal with the reflection, the only answer was a setup that placed the camera as close as possible to the perspex, which eliminated all but the most stubborn of reflections.

The subsequent processing – even on a computer that’s no slouch, with an i9 processor – took around 16 hours with accuracy set to high. The computer was working so hard that someone actually came over to find out what the loud whirring noise was; they thought the air conditioning must be broken.

What makes it really fun is when your computer gets about 8 hours into the render and only then decides to tell you that it won’t have enough memory to complete the job.

The majority of that time was spent generating the dense point cloud, which is like a 3D model made up of all the common points the software has found between photographs, placed in 3D space. From this, the software is able to build a mesh; models are based on faces, which are triangles it draws between the points. The more points, the more faces, and the more faces, the more detailed and accurate the final mesh.
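The link between points and faces is easy to see in a toy example. The Python sketch below joins a neat, regular grid of points into triangles; it is not how photogrammetry software builds a mesh from a messy real-world point cloud, and `grid_faces` is a name made up for this illustration, but it shows why more points means more faces.

```python
def grid_faces(points_x: int, points_y: int) -> list[tuple[int, int, int]]:
    """Triangulate a regular grid of points; each face is three point indices."""
    faces = []
    for y in range(points_y - 1):
        for x in range(points_x - 1):
            i = y * points_x + x  # index of this grid cell's first corner
            # Split the four-cornered cell into two triangles.
            faces.append((i, i + 1, i + points_x))
            faces.append((i + 1, i + points_x + 1, i + points_x))
    return faces


coarse = grid_faces(3, 3)  # 9 points
fine = grid_faces(5, 5)    # 25 points covering the same area
print(len(coarse), len(fine))  # 8 32
```

Going from 9 points to 25 takes the mesh from 8 faces to 32, so the surface can follow much finer detail.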

Yeah…this all gets rather complicated unless you’re the kind of person who gets sweaty when you see cool camera equipment and software; but suffice to say, the final Durham 3D model ended up with 3,198,026 faces. The 16 hours of processing was split over several days, and the initial model was 267 MB.
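For a rough sense of scale, those headline figures can be boiled down with some back-of-the-envelope Python. This is only a sketch: it assumes 1 MB means 1,000,000 bytes, and it treats the whole file as if it were nothing but faces, which overstates the per-face figure if the file also holds textures or other data.

```python
faces = 3_198_026
model_bytes = 267 * 1_000_000        # assuming 1 MB = 1,000,000 bytes
photos = 1_000                       # 20 rows of 50 pictures
processing_seconds = 16 * 60 * 60    # around 16 hours in total

bytes_per_face = model_bytes / faces
seconds_per_photo = processing_seconds / photos
print(round(bytes_per_face, 1), round(seconds_per_photo, 1))
```

That works out at somewhere in the region of 80-odd bytes per face and just under a minute of processing per photograph.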

Although it was a big undertaking, the Durham 3D model gives us an easily shareable, permanent record of a view that we’d otherwise struggle to see, at a fraction of the cost that would otherwise be involved with aerial mapping.

You can see the model in action on the tablet app given to visitors of Durham Cathedral’s North West Tower tour, and on the big screen in the reception of the Durham World Heritage Site visitor centre.

If you'd like a 3D model creating, then get in contact with us.